From 790043364026835b0d834b165b1a65f7323cb6f1 Mon Sep 17 00:00:00 2001

From: 游雁 <zhifu.gzf@alibaba-inc.com>

Date: Wed, 16 Oct 2024 14:31:31 +0800

Subject: [PATCH] funasr tables

---

docs/tutorial/README_zh.md | 55 +++++++

docs/tutorial/Tables_zh.md | 322 ++++++++++++++++++++++++++++++++++++++++++++++

2 files changed, 377 insertions(+), 0 deletions(-)

diff --git a/docs/tutorial/README_zh.md b/docs/tutorial/README_zh.md

index 7b78506..85c1950 100644

--- a/docs/tutorial/README_zh.md

+++ b/docs/tutorial/README_zh.md

@@ -7,6 +7,7 @@

<a href="#模型推理"> Model Inference </a>
｜<a href="#模型训练与测试"> Model Training and Testing </a>
｜<a href="#模型导出与测试"> Model Export and Testing </a>
+｜<a href="#新模型注册教程"> New Model Registration Tutorial </a>

</h4>

</div>

@@ -434,3 +435,57 @@

```

For more examples, see the [samples](https://github.com/alibaba-damo-academy/FunASR/tree/main/runtime/python/onnxruntime)

+

+<a name="新模型注册教程"></a>
+## New Model Registration Tutorial

+

+

+### View the Registry

+

+```python

+from funasr.register import tables

+

+tables.print()

+```

+

+You can also print the registry for a specific type: `tables.print("model")`

+

+

+### Register a New Model

+

+```python

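+import torch.nn as nn
+
+from funasr.register import tables
+
+
+# Register the class in the "model_classes" table under the key "MinMo_S2T".
+@tables.register("model_classes", "MinMo_S2T")
+class MinMo_S2T(nn.Module):
+    def __init__(self, *args, **kwargs):
+        super().__init__()
+        ...
+
+    def forward(
+        self,
+        **kwargs,
+    ):
+        # Forward computation goes here.
+        ...
+
+    def inference(
+        self,
+        data_in,
+        data_lengths=None,
+        key: list = None,
+        tokenizer=None,
+        frontend=None,
+        **kwargs,
+    ):
+        # Inference logic goes here.
+        ...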

+

+```

+

+Then specify the newly registered model in config.yaml:

+

+```yaml

+model: MinMo_S2T

+model_conf:

+ ...

+```

+

+

+[More detailed tutorial](https://github.com/alibaba-damo-academy/FunASR/docs/tutorial/Tables_zh.md)

\ No newline at end of file

diff --git a/docs/tutorial/Tables_zh.md b/docs/tutorial/Tables_zh.md

new file mode 100644

index 0000000..ec64baf

--- /dev/null

+++ b/docs/tutorial/Tables_zh.md

@@ -0,0 +1,322 @@

+# FunASR-1.x.x Model Registration Tutorial

+

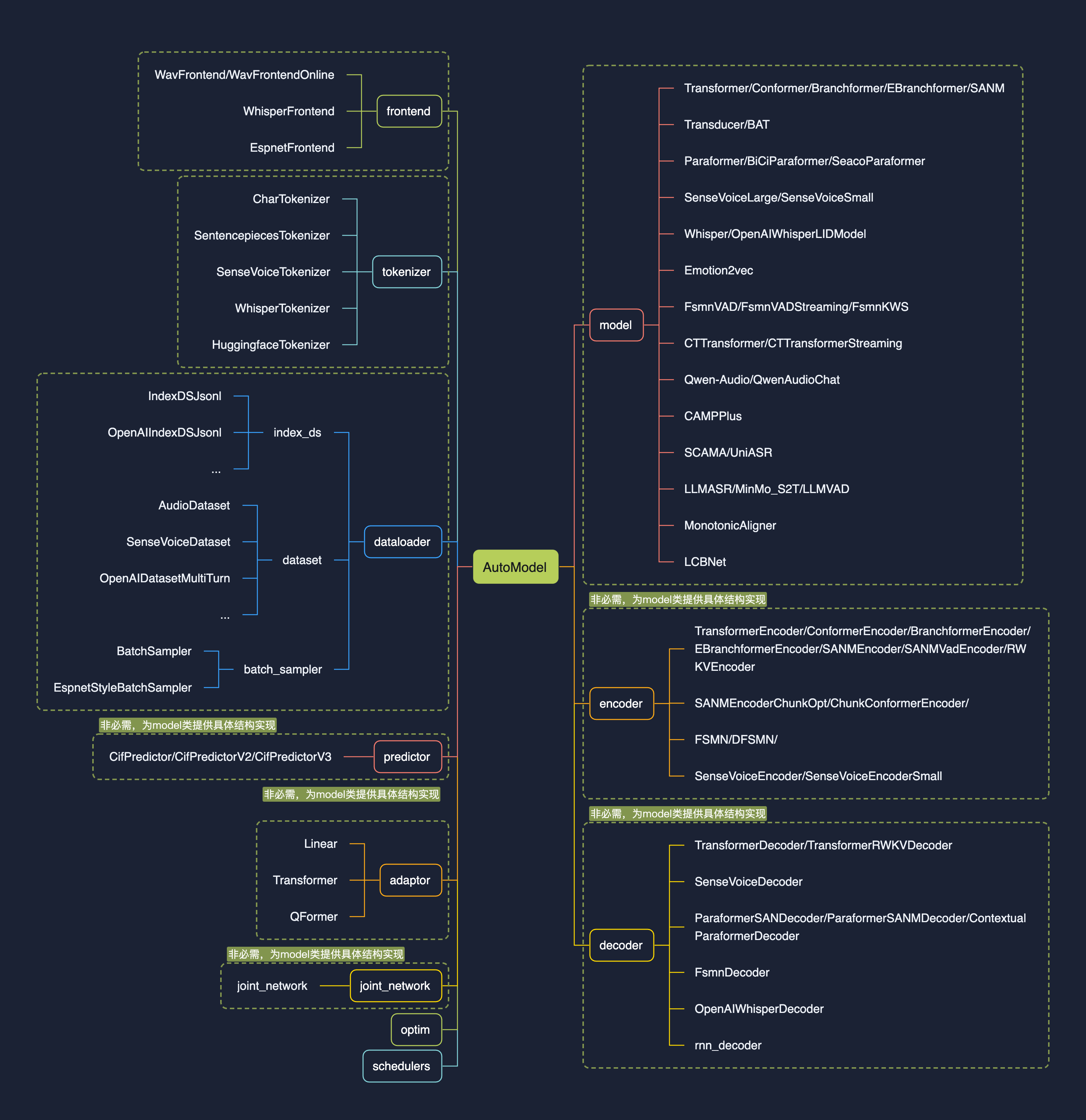

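+Version 1.0 was designed to **make model integration easier**. Its core features are the registry and AutoModel:
+
+* The registry lets developers plug in models like building blocks and is compatible with multiple tasks;
+
+* The redesigned AutoModel interface unifies the inference and training interfaces of modelscope, huggingface, and funasr, and lets you choose which hub to download from;
+
+* Model export is supported, along with demo-level service deployment and industrial-grade high-concurrency service deployment;
+
+* Inference and training scripts are unified across academic and industrial models.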

+

+

+

+

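+# Quick Start
+
+## Usage with AutoModel
+
+### Paraformer Model
+
+Takes speech of arbitrary length as input and outputs the corresponding text, with punctuation and sentence segmentation, character-level timestamps, and speaker identity.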

+

+```python

+from funasr import AutoModel

+

+model = AutoModel(model="paraformer-zh",

+ vad_model="fsmn-vad",

+ vad_kwargs={"max_single_segment_time": 60000},

+ punc_model="ct-punc",

+ # spk_model="cam++"

+ )

+wav_file = f"{model.model_path}/example/asr_example.wav"

+res = model.generate(input=wav_file, batch_size_s=300, batch_size_threshold_s=60, hotword='魔搭')

+print(res)

+```

+

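+### SenseVoiceSmall Model
+
+Takes speech of arbitrary length as input and outputs the corresponding text with punctuation and sentence segmentation. Five languages are supported: Chinese, English, Japanese, Cantonese, and Korean. Character-level timestamps and speaker identity will be supported later.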

+

+```python

+from funasr import AutoModel

+from funasr.utils.postprocess_utils import rich_transcription_postprocess

+

+model = AutoModel(

+ model="iic/SenseVoiceSmall",

+ vad_model="fsmn-vad",

+ vad_kwargs={"max_single_segment_time": 30000},

+ device="cuda:0",

+)

+

+res = model.generate(

+ input=f"{model.model_path}/example/en.mp3",

+    language="auto",  # "zh", "en", "yue", "ja", "ko", "nospeech"

+ use_itn=True,

+ batch_size_s=60,

+)

+text = rich_transcription_postprocess(res[0]["text"])

+print(text)  # 👏Senior staff, Priipal Doris Jackson, Wakefield faculty, and, of course, my fellow classmates.I am honored to have been chosen to speak before my classmates, as well as the students across America today.

+```

+

+## API Documentation
+
+#### AutoModel Definition

+

+```plaintext

+model = AutoModel(model=[str], device=[str], ncpu=[int], output_dir=[str], batch_size=[int], hub=[str], **kwargs)

+```

+

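+* `model`(str): a model name from the [model zoo](https://github.com/alibaba-damo-academy/FunASR/tree/main/model_zoo), or a path to a model on local disk
+
+* `device`(str): `cuda:0` (default, GPU 0), runs inference on the specified GPU. If set to `cpu`, inference runs on the CPU
+
+* `ncpu`(int): `4` (default), the number of threads used for intra-op parallelism on the CPU
+
+* `output_dir`(str): `None` (default); if set, the path where results are written
+
+* `batch_size`(int): `1` (default), the batch size during decoding, in number of samples
+
+* `hub`(str): `ms` (default) downloads the model from modelscope; `hf` downloads it from huggingface.
+
+* `**kwargs`(dict): any parameter in `config.yaml` can be specified directly here, for example the maximum segment length of the VAD model, `max_single_segment_time=6000` (milliseconds).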
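As a rough illustration of the `**kwargs` behaviour described above, the sketch below overlays caller-supplied keyword arguments on defaults loaded from a config file. The helper `merge_overrides` and the sample values are hypothetical and are not FunASR's actual implementation; how FunASR merges nested sections internally is not stated in this document.

```python
def merge_overrides(defaults, overrides):
    """Return a copy of `defaults` with `overrides` applied, merging nested dicts."""
    merged = dict(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Both sides are sections: merge them key by key.
            merged[key] = merge_overrides(merged[key], value)
        else:
            # Scalars (or new keys) simply replace the default.
            merged[key] = value
    return merged


# Hypothetical defaults, standing in for values loaded from a config.yaml.
config_defaults = {
    "max_single_segment_time": 60000,
    "frontend_conf": {"fs": 16000, "n_mels": 128},
}

# Keyword arguments as a caller might pass them.
user_kwargs = {"max_single_segment_time": 6000, "frontend_conf": {"n_mels": 80}}

effective = merge_overrides(config_defaults, user_kwargs)
print(effective)
```

The defaults are left untouched, so the same base configuration can be reused for several calls with different overrides.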

+

+

+#### AutoModel Inference

+

+```plaintext

+res = model.generate(input=[str], output_dir=[str])

+```

+

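+`input` can take any of these forms:
+
+* wav file path, e.g. asr\_example.wav
+
+* pcm file path, e.g. asr\_example.pcm; in this case the audio sampling rate `fs` must be specified (default 16000)
+
+* audio byte stream, e.g. byte data from a microphone
+
+* wav.scp, a Kaldi-style wav list (`wav_id \t wav_path`), e.g.: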

+

+

+```plaintext

+asr_example1 ./audios/asr_example1.wav

+asr_example2 ./audios/asr_example2.wav

+

+```

+

+With this type of input ...

+

+* audio samples, e.g. `audio, rate = soundfile.read("asr_example_zh.wav")`, with data type numpy.ndarray. Batch input is supported as a list: `[audio_sample1, audio_sample2, ..., audio_sampleN]`
+
+* fbank input, with batching supported. The shape is \[batch, frames, dim\] and the type is torch.Tensor
+
+* `output_dir`: None (default); if set, the path where results are written
+
+* `**kwargs`(dict): model-specific inference parameters, e.g. `beam_size=10`, `decoding_ctc_weight=0.1`.
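To make the `wav.scp` format listed above concrete, here is a minimal sketch that parses Kaldi-style `wav_id wav_path` lines into pairs. The function name `parse_wav_scp` is hypothetical and is not part of FunASR; the sample entries are the ones shown in the example above.

```python
def parse_wav_scp(text):
    """Parse Kaldi-style `wav_id<whitespace>wav_path` lines into (id, path) pairs."""
    entries = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        # Split on the first run of whitespace (tab or spaces) only,
        # so paths that contain spaces stay intact.
        wav_id, wav_path = line.split(maxsplit=1)
        entries.append((wav_id, wav_path))
    return entries


scp = """asr_example1 ./audios/asr_example1.wav
asr_example2 ./audios/asr_example2.wav
"""

entries = parse_wav_scp(scp)
for wav_id, wav_path in entries:
    print(wav_id, wav_path)
```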

+

+

+Detailed documentation: [https://github.com/modelscope/FunASR/blob/main/examples/README\_zh.md](https://github.com/modelscope/FunASR/blob/main/examples/README_zh.md)

+

+# The Registry in Detail
+
+## Model Resource Directory
+
+
+
+**Configuration file**: config.yaml

+

+```yaml

+model: SenseVoiceLarge

+model_conf:

+ lsm_weight: 0.1

+ length_normalized_loss: true

+ activation_checkpoint: true

+ sos: <|startoftranscript|>

+ eos: <|endoftext|>

+ downsample_rate: 4

+ use_padmask: true

+

+encoder: SenseVoiceEncoder

+encoder_conf:

+ input_size: 128

+ attention_heads: 20

+ linear_units: 1280

+ num_blocks: 32

+ dropout_rate: 0.1

+ positional_dropout_rate: 0.1

+ attention_dropout_rate: 0.1

+ kernel_size: 31

+ sanm_shfit: 0

+ att_type: self_att_fsmn_sdpa

+ downsample_rate: 4

+ use_padmask: true

+ max_position_embeddings: 2048

+ rope_theta: 10000

+

+frontend: WhisperFrontend

+frontend_conf:

+ fs: 16000

+ n_mels: 128

+ do_pad_trim: false

+ filters_path: null

+

+tokenizer: SenseVoiceTokenizer

+tokenizer_conf:

+ vocab_path: null

+ is_multilingual: true

+ num_languages: 8749

+

+dataset: SenseVoiceDataset

+dataset_conf:

+ index_ds: IndexDSJsonl

+ batch_sampler: BatchSampler

+ batch_type: token

+ batch_size: 12000

+ sort_size: 64

+ max_token_length: 2000

+ min_token_length: 60

+ max_source_length: 2000

+ min_source_length: 60

+ max_target_length: 150

+ min_target_length: 0

+ shuffle: true

+ num_workers: 4

+ sos: ${model_conf.sos}

+ eos: ${model_conf.eos}

+ IndexDSJsonl: IndexDSJsonl

+

+train_conf:

+ accum_grad: 1

+ grad_clip: 5

+ max_epoch: 5

+ keep_nbest_models: 200

+ avg_nbest_model: 200

+ log_interval: 100

+ resume: true

+ validate_interval: 10000

+ save_checkpoint_interval: 10000

+

+optim: adamw

+optim_conf:

+ lr: 2.5e-05

+

+scheduler: warmuplr

+scheduler_conf:

+ warmup_steps: 20000

+

+```
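The `${model_conf.sos}` and `${model_conf.eos}` values in `dataset_conf` above refer to other keys of the same file, in a syntax that resembles OmegaConf-style variable interpolation. The sketch below is a hand-written illustration of that idea on a plain dictionary, not the library FunASR actually uses to load the file; it only handles values that consist entirely of one `${a.b}` reference.

```python
import re

# Matches a value that is exactly one reference such as "${model_conf.sos}".
_REF = re.compile(r"\$\{([^}]+)\}")


def lookup(config, dotted_key):
    """Follow a dotted key such as 'model_conf.sos' through nested dicts."""
    node = config
    for part in dotted_key.split("."):
        node = node[part]
    return node


def resolve(node, root):
    """Return a copy of `node` with whole-value ${...} references replaced."""
    if isinstance(node, dict):
        return {key: resolve(value, root) for key, value in node.items()}
    if isinstance(node, str):
        match = _REF.fullmatch(node)
        if match:
            return lookup(root, match.group(1))
    return node


# A small excerpt of the config above, written as a Python dict.
config = {
    "model_conf": {"sos": "<|startoftranscript|>", "eos": "<|endoftext|>"},
    "dataset_conf": {"sos": "${model_conf.sos}", "eos": "${model_conf.eos}"},
}

resolved = resolve(config, config)
print(resolved["dataset_conf"]["sos"])
print(resolved["dataset_conf"]["eos"])
```

Referencing the tokens instead of repeating them keeps the dataset and the model in agreement when one of them changes.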

+

+**Model parameters**: model.pt
+
+**Path resolution**: configuration.json

+

+```json

+{

+ "framework": "pytorch",

+ "task" : "auto-speech-recognition",

+ "model": {"type" : "funasr"},

+ "pipeline": {"type":"funasr-pipeline"},

+ "model_name_in_hub": {

+ "ms":"",

+ "hf":""},

+ "file_path_metas": {

+ "init_param":"model.pt",

+ "config":"config.yaml",

+ "tokenizer_conf": {"vocab_path": "tokens.tiktoken"},

+ "frontend_conf":{"filters_path": "mel_filters.npz"}}

+}

+```
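The nested keys under `file_path_metas` mirror keys that are `null` in config.yaml (`tokenizer_conf.vocab_path`, `frontend_conf.filters_path`), which suggests that the relative file names are resolved against the model directory. The sketch below illustrates that reading; `resolve_paths` and the directory `/models/SenseVoice` are hypothetical, and the actual resolution logic in FunASR may differ.

```python
import os


def resolve_paths(metas, model_dir):
    """Recursively join every string leaf in `metas` onto `model_dir`."""
    resolved = {}
    for key, value in metas.items():
        if isinstance(value, dict):
            # Nested sections such as tokenizer_conf keep their structure.
            resolved[key] = resolve_paths(value, model_dir)
        else:
            resolved[key] = os.path.join(model_dir, value)
    return resolved


# Copied from the "file_path_metas" section of configuration.json above.
file_path_metas = {
    "init_param": "model.pt",
    "config": "config.yaml",
    "tokenizer_conf": {"vocab_path": "tokens.tiktoken"},
    "frontend_conf": {"filters_path": "mel_filters.npz"},
}

model_dir = "/models/SenseVoice"  # hypothetical local model directory
paths = resolve_paths(file_path_metas, model_dir)
print(paths["init_param"])
print(paths["tokenizer_conf"]["vocab_path"])
```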

+

+## Registry
+
+### View the Registry

+

+```python

+from funasr.register import tables

+

+tables.print()

+```

+

+You can also print the registry for a specific type: `tables.print("model")`

+

+

+### New Registration

+

+```python

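+import torch.nn as nn
+
+from funasr.register import tables
+
+
+# Register the class in the "model_classes" table under the key "MinMo_S2T".
+@tables.register("model_classes", "MinMo_S2T")
+class MinMo_S2T(nn.Module):
+    def __init__(self, *args, **kwargs):
+        super().__init__()
+        ...
+
+    def forward(
+        self,
+        **kwargs,
+    ):
+        # Forward computation goes here.
+        ...
+
+    def inference(
+        self,
+        data_in,
+        data_lengths=None,
+        key: list = None,
+        tokenizer=None,
+        frontend=None,
+        **kwargs,
+    ):
+        # Inference logic goes here.
+        ...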

+

+```

+

+Specify the newly registered model in config.yaml:

+

+```yaml

+model: MinMo_S2T

+model_conf:

+ ...

+```
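The register-then-configure flow above can be pictured with a small self-contained sketch: a decorator stores a class in a named table, and a builder looks up the `model` key from the config and passes `model_conf` as keyword arguments. The `Registry` class, `build_model`, and `ToyModel` below are hypothetical stand-ins and are not the implementation behind `funasr.register.tables`.

```python
from collections import defaultdict


class Registry:
    """Hypothetical stand-in for a registry; not funasr's actual implementation."""

    def __init__(self):
        # Maps table name -> {registered key -> class}.
        self._tables = defaultdict(dict)

    def register(self, table, key):
        """Return a decorator that stores the class under tables[table][key]."""
        def decorator(cls):
            if key in self._tables[table]:
                raise KeyError(f"{key!r} is already registered in {table!r}")
            self._tables[table][key] = cls
            return cls
        return decorator

    def get(self, table, key):
        return self._tables[table][key]


registry = Registry()


@registry.register("model_classes", "ToyModel")
class ToyModel:
    def __init__(self, hidden_size=8, **kwargs):
        self.hidden_size = hidden_size


def build_model(config):
    """Look up config["model"] in the registry and pass model_conf as kwargs."""
    cls = registry.get("model_classes", config["model"])
    return cls(**config.get("model_conf", {}))


# Plays the role of a config.yaml with `model: ToyModel` and a `model_conf` section.
model = build_model({"model": "ToyModel", "model_conf": {"hidden_size": 16}})
print(type(model).__name__, model.hidden_size)
```

Because the config names the class only by its registered key, swapping in a different model is a one-line change to the config rather than a code change in the caller.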

+

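+## Registration Principles
+
+* Model: models are independent of one another. Each model needs its own new directory under funasr/models/. Do not use class inheritance! Do not import from other model directories; put everything the model needs into its own directory! Do not modify existing model code!
+
+* dataset, frontend, tokenizer: reuse an existing one if possible. If none fits, register a new one and modify that; do not modify the original!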

+

+

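+# Standalone Repository
+
+A model can live in a standalone repository, either to keep the code private or to open-source it independently. Thanks to the registration mechanism, it does not need to be integrated into funasr: you can run inference through funasr or directly, and fine-tuning is supported as well.
+
+**Inference with AutoModel**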

+

+```python

+from funasr import AutoModel

+

+# trust_remote_code: `True` means the model implementation is loaded from `remote_code`; `remote_code` specifies where the model code lives (e.g. `model.py` in the current directory) and supports absolute paths, relative paths, and network URLs.

+model = AutoModel(

+ model="iic/SenseVoiceSmall",

+ trust_remote_code=True,

+ remote_code="./model.py",

+)

+```

+

+**Direct Inference**

+

+```python

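+from funasr.utils.postprocess_utils import rich_transcription_postprocess
+from model import SenseVoiceSmall
+
+m, kwargs = SenseVoiceSmall.from_pretrained(model="iic/SenseVoiceSmall")
+m.eval()
+
+res = m.inference(
+    data_in=f"{kwargs['model_path']}/example/en.mp3",
+    language="auto",  # "zh", "en", "yue", "ja", "ko", "nospeech"
+    use_itn=False,
+    ban_emo_unk=False,
+    **kwargs,
+)
+
+# Post-process the raw text of the first result (the res[0][0] indexing is an assumption).
+text = rich_transcription_postprocess(res[0][0]["text"])
+print(text)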

+```

+

+Fine-tuning reference: [https://github.com/FunAudioLLM/SenseVoice/blob/main/finetune.sh](https://github.com/FunAudioLLM/SenseVoice/blob/main/finetune.sh)

\ No newline at end of file

--

Gitblit v1.9.1