From 949a95986c3c24d6a152e1f204cfab8a0d9f1685 Mon Sep 17 00:00:00 2001

From: 游雁 <zhifu.gzf@alibaba-inc.com>

Date: Thu, 31 Oct 2024 16:29:40 +0800

Subject: [PATCH] docs: remove Paraformer model example code

---

docs/tutorial/Tables.md | 361 +++++++++++++++++++++++++++++++++++++++++++++++++++

docs/tutorial/Tables_zh.md | 18 --

2 files changed, 361 insertions(+), 18 deletions(-)

diff --git a/docs/tutorial/Tables.md b/docs/tutorial/Tables.md

new file mode 100644

index 0000000..3dfaa73

--- /dev/null

+++ b/docs/tutorial/Tables.md

@@ -0,0 +1,361 @@

+# FunASR-1.x.x Registration Tutorial

+

+The funasr-1.x.x release was designed to make model integration easier. Its core features are the registry and AutoModel:

+

+* The registry lets models be assembled from building blocks and is compatible with a variety of tasks;

+

+* The newly designed AutoModel interface unifies the modelscope, huggingface, and funasr inference and training interfaces, and lets you freely choose which hub to download from;

+

+* Model export, demo-level service deployment, and industrial-level multi-concurrency service deployment are supported;

+

+* Inference and training scripts are unified across academic and industrial models;

+

+

+# Quick start

+

+## AutoModel usage

+

+### SenseVoiceSmall model

+

+Takes speech of any length as input and outputs the corresponding text with punctuation and sentence breaks. Five languages are supported: Chinese, English, Japanese, Cantonese, and Korean. \[Word-level timestamps and speaker identity\] will be supported later.

+

+```python

+from funasr import AutoModel

+from funasr.utils.postprocess_utils import rich_transcription_postprocess

+

+model = AutoModel(

+ model="iic/SenseVoiceSmall",

+ vad_model="fsmn-vad",

+ vad_kwargs={"max_single_segment_time": 30000},

+ device="cuda:0",

+)

+

+res = model.generate(

+ input=f"{model.model_path}/example/en.mp3",

+ language="auto", # "zh", "en", "yue", "ja", "ko", "nospeech"

+ use_itn=True,

+ batch_size_s=60,

+)

+text = rich_transcription_postprocess(res[0]["text"])

+print(text) #👏Senior staff, Priipal Doris Jackson, Wakefield faculty, and, of course, my fellow classmates.I am honored to have been chosen to speak before my classmates, as well as the students across America today.

+```

+

+## API documentation

+

+#### Definition of AutoModel

+

+```plaintext

+model = AutoModel(model=[str], device=[str], ncpu=[int], output_dir=[str], batch_size=[int], hub=[str], **kwargs)

+```

+

+* `model`(str): a model name from the [model zoo](https://github.com/alibaba-damo-academy/FunASR/tree/main/model_zoo), or a model path on local disk

+

+* `device`(str): `cuda:0` (default, gpu0), which uses the GPU for inference. If set to `cpu`, the CPU is used for inference

+

+* `ncpu`(int): `4` (default), the number of threads used for intra-op parallelism on the CPU

+

+* `output_dir`(str): `None` (default). If set, the path where the results are written

+

+* `batch_size`(int): `1` (default), the number of samples per batch during decoding

+

+* `hub`(str): `ms` (default), which downloads the model from modelscope. If set to `hf`, the model is downloaded from huggingface.

+

+* `**kwargs`(dict): any parameter in `config.yaml` can be specified directly here, for example the maximum segment length of the vad model, `max_single_segment_time=6000` (milliseconds).

+

+

+#### AutoModel inference

+

+```plaintext

+res = model.generate(input=[str], output_dir=[str])

+```

+

+* `input`: the input to decode, which can be:

+

+  * wav file path, for example: asr\_example.wav

+

+  * pcm file path, for example: asr\_example.pcm. In this case you need to specify the audio sampling rate fs (default is 16000)

+

+  * Audio byte stream, for example: byte data from a microphone

+

+  * wav.scp, a kaldi-style wav list (`wav_id \t wav_path`), for example:

+

+

+```plaintext

+asr_example1  ./audios/asr_example1.wav

+asr_example2  ./audios/asr_example2.wav

+

+```

+

+With this kind of `wav.scp` input, `output_dir` must be set to save the output results.

+

+  * Audio samples, for example `audio, rate = soundfile.read("asr_example_zh.wav")`, of type numpy.ndarray. Batch input is supported as a list: `[audio_sample1, audio_sample2, ..., audio_sampleN]`

+

+  * fbank input, with batching supported. The shape is \[batch, frames, dim\] and the type is torch.Tensor

+

+* `output_dir`: None (default). If set, the path where the results are written

+

+* `**kwargs`(dict): model-related inference parameters, e.g. `beam_size=10`, `decoding_ctc_weight=0.1`.

+

+

+Detailed documentation: [https://github.com/modelscope/FunASR/blob/main/examples/README\_zh.md](https://github.com/modelscope/FunASR/blob/main/examples/README_zh.md)

+

+# Registry Details

+

+Taking the SenseVoiceSmall model as an example, this section explains how to register a new model. Model links:

+

+**modelscope:** [https://www.modelscope.cn/models/iic/SenseVoiceSmall/files](https://www.modelscope.cn/models/iic/SenseVoiceSmall/files)

+

+**huggingface:** [https://huggingface.co/FunAudioLLM/SenseVoiceSmall](https://huggingface.co/FunAudioLLM/SenseVoiceSmall)

+

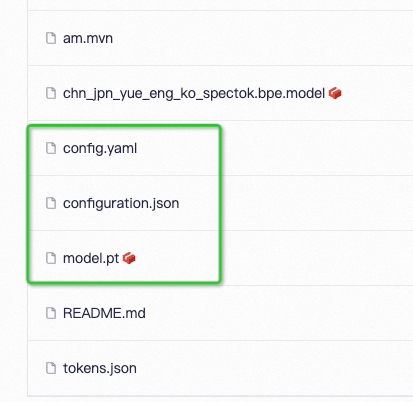

+## Model Resource Catalog

+

+

+

+Configuration file: config.yaml

+

+```yaml

+encoder: SenseVoiceEncoderSmall

+encoder_conf:

+ output_size: 512

+ attention_heads: 4

+ linear_units: 2048

+ num_blocks: 50

+ tp_blocks: 20

+ dropout_rate: 0.1

+ positional_dropout_rate: 0.1

+ attention_dropout_rate: 0.1

+ input_layer: pe

+ pos_enc_class: SinusoidalPositionEncoder

+ normalize_before: true

+ kernel_size: 11

+ sanm_shfit: 0

+ selfattention_layer_type: sanm

+

+

+model: SenseVoiceSmall

+model_conf:

+ length_normalized_loss: true

+ sos: 1

+ eos: 2

+ ignore_id: -1

+

+tokenizer: SentencepiecesTokenizer

+tokenizer_conf:

+ bpemodel: null

+ unk_symbol: <unk>

+ split_with_space: true

+

+frontend: WavFrontend

+frontend_conf:

+ fs: 16000

+ window: hamming

+ n_mels: 80

+ frame_length: 25

+ frame_shift: 10

+ lfr_m: 7

+ lfr_n: 6

+ cmvn_file: null

+

+

+dataset: SenseVoiceCTCDataset

+dataset_conf:

+ index_ds: IndexDSJsonl

+ batch_sampler: EspnetStyleBatchSampler

+ data_split_num: 32

+ batch_type: token

+ batch_size: 14000

+ max_token_length: 2000

+ min_token_length: 60

+ max_source_length: 2000

+ min_source_length: 60

+ max_target_length: 200

+ min_target_length: 0

+ shuffle: true

+ num_workers: 4

+ sos: ${model_conf.sos}

+ eos: ${model_conf.eos}

+ IndexDSJsonl: IndexDSJsonl

+ retry: 20

+

+train_conf:

+ accum_grad: 1

+ grad_clip: 5

+ max_epoch: 20

+ keep_nbest_models: 10

+ avg_nbest_model: 10

+ log_interval: 100

+ resume: true

+ validate_interval: 10000

+ save_checkpoint_interval: 10000

+

+optim: adamw

+optim_conf:

+ lr: 0.00002

+scheduler: warmuplr

+scheduler_conf:

+ warmup_steps: 25000

+

+```

+

+Model parameters: model.pt

+

+Path resolution: configuration.json (not required)

+

+```json

+{

+ "framework": "pytorch",

+ "task" : "auto-speech-recognition",

+ "model": {"type" : "funasr"},

+ "pipeline": {"type":"funasr-pipeline"},

+ "model_name_in_hub": {

+ "ms":"",

+ "hf":""},

+ "file_path_metas": {

+ "init_param":"model.pt",

+ "config": "config.yaml",

+ "tokenizer_conf": {"bpemodel": "chn_jpn_yue_eng_ko_spectok.bpe.model"},

+ "frontend_conf":{"cmvn_file": "am.mvn"}}

+}

+```

+

+The purpose of configuration.json is to prepend the model root directory to the entries in file\_path\_metas so that the paths resolve correctly. For example, if the model root directory is /home/zhifu.gzf/init\_model/SenseVoiceSmall, the relevant paths in the config.yaml in that directory are replaced with the following full paths (unrelated configuration omitted):

+

+```yaml

+init_param: /home/zhifu.gzf/init_model/SenseVoiceSmall/model.pt

+

+tokenizer_conf:

+ bpemodel: /home/zhifu.gzf/init_model/SenseVoiceSmall/chn_jpn_yue_eng_ko_spectok.bpe.model

+

+frontend_conf:

+ cmvn_file: /home/zhifu.gzf/init_model/SenseVoiceSmall/am.mvn

+```

+

+## Registry

+

+

+

+### View Registry

+

+```plaintext

+from funasr.register import tables

+

+tables.print()

+```

+

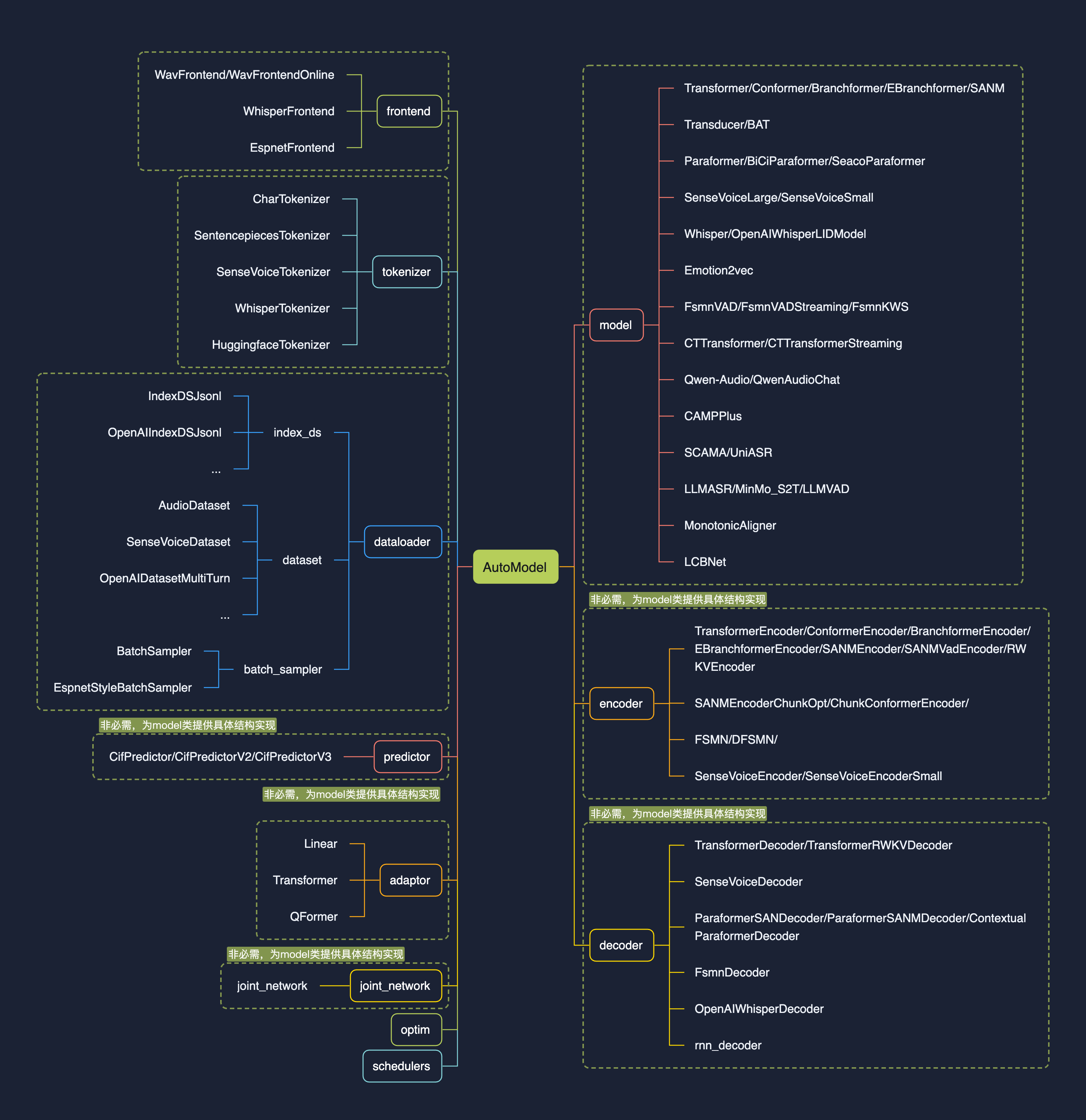

+You can also view a single registry category, for example `tables.print("model")`. The models currently registered in funasr are shown in the figure above. The following categories are currently predefined:

+

+```python

+ model_classes = {}

+ frontend_classes = {}

+ specaug_classes = {}

+ normalize_classes = {}

+ encoder_classes = {}

+ decoder_classes = {}

+ joint_network_classes = {}

+ predictor_classes = {}

+ stride_conv_classes = {}

+ tokenizer_classes = {}

+ dataloader_classes = {}

+ batch_sampler_classes = {}

+ dataset_classes = {}

+ index_ds_classes = {}

+```

+

+### Registering a model

+

+```python

+import torch.nn as nn

+

+from funasr.register import tables

+

+

+# Register the class under the "model_classes" category with the name "SenseVoiceSmall".

+@tables.register("model_classes", "SenseVoiceSmall")

+class SenseVoiceSmall(nn.Module):

+    def __init__(self, *args, **kwargs):

+        super().__init__()

+        ...

+

+    def forward(

+        self,

+        **kwargs,

+    ):

+        ...

+

+    def inference(

+        self,

+        data_in,

+        data_lengths=None,

+        key: list = None,

+        tokenizer=None,

+        frontend=None,

+        **kwargs,

+    ):

+        ...

+

+```

+

+Add `@tables.register("model_classes", "SenseVoiceSmall")` directly above the class to be registered. The class must implement the following methods: `__init__`, `forward`, and `inference`.

+

+Usage of register:

+

+```python

+@tables.register("registration category", "registration name")

+```

+

+Here, "registration category" can be one of the predefined categories (see above). If you define a new category of your own, it is automatically added to the registry. "registration name" is the name you want to register under, and it can be used directly afterwards.

+

+Full code: [https://github.com/modelscope/FunASR/blob/main/funasr/models/sense\_voice/model.py#L443](https://github.com/modelscope/FunASR/blob/main/funasr/models/sense_voice/model.py#L443)

+

+After registration is complete, specify the newly registered model in config.yaml to define the model.

+

+```yaml

+model: SenseVoiceSmall

+model_conf:

+ ...

+```

+

+### Registration failed

+

+If the registered model or method cannot be found, the assertion `assert model_class is not None, f'{kwargs["model"]} is not registered'` fails. Registration works by importing the model file, so you can find the specific cause of a registration failure by importing that file yourself. For example, the model file above is funasr/models/sense\_voice/model.py:

+

+```python

+from funasr.models.sense_voice.model import *

+```

+

+## Principles of Registration

+

+* Model: models are independent of each other. Each model needs its own new directory under funasr/models/. Do not use class inheritance!!! Do not import from other model directories; put everything you need into your own model directory!!! Do not modify existing model code!!!

+

+* dataset, frontend, tokenizer: if you can reuse an existing one, reuse it directly. If you cannot, register a new one and modify that; do not modify the original!!!

+

+

+# Standalone repository

+

+A model can live in a standalone repository, either to keep the code private or to open-source it independently. Thanks to the registration mechanism, it does not need to be integrated into funasr. You can run inference through funasr's AutoModel or directly, and fine-tuning is also supported.

+

+**Using AutoModel for inference**

+

+```python

+from funasr import AutoModel

+

+# trust_remote_code: `True` means the model implementation is loaded from `remote_code`. `remote_code` specifies the location of the model code (for example, `model.py` in the current directory); absolute paths, relative paths, and network URLs are supported.

+model = AutoModel(

+    model="iic/SenseVoiceSmall",

+    trust_remote_code=True,

+    remote_code="./model.py",

+)

+```

+

+**Direct inference**

+

+```python

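+from funasr.utils.postprocess_utils import rich_transcription_postprocess

+from model import SenseVoiceSmall

+

+m, kwargs = SenseVoiceSmall.from_pretrained(model="iic/SenseVoiceSmall")

+m.eval()

+

+res = m.inference(

+    data_in=f"{kwargs['model_path']}/example/en.mp3",

+    language="auto", # "zh", "en", "yue", "ja", "ko", "nospeech"

+    use_itn=False,

+    ban_emo_unk=False,

+    **kwargs,

+)

+

+# Strip the rich-transcription tags from the first result before printing.

+text = rich_transcription_postprocess(res[0][0]["text"])

+print(text)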

+```

+

+Fine-tuning reference: [https://github.com/FunAudioLLM/SenseVoice/blob/main/finetune.sh](https://github.com/FunAudioLLM/SenseVoice/blob/main/finetune.sh)

\ No newline at end of file

diff --git a/docs/tutorial/Tables_zh.md b/docs/tutorial/Tables_zh.md

index 576b408..8142669 100644

--- a/docs/tutorial/Tables_zh.md

+++ b/docs/tutorial/Tables_zh.md

@@ -15,24 +15,6 @@

## AutoModel-based usage

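-### Paraformer model

-

-Takes speech of any length as input and outputs the corresponding text, with punctuation and sentence breaks, character-level timestamps, and speaker identity.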

-

-```python

-from funasr import AutoModel

-

-model = AutoModel(model="paraformer-zh",

- vad_model="fsmn-vad",

- vad_kwargs={"max_single_segment_time": 60000},

- punc_model="ct-punc",

- # spk_model="cam++"

- )

-wav_file = f"{model.model_path}/example/asr_example.wav"

-res = model.generate(input=wav_file, batch_size_s=300, batch_size_threshold_s=60, hotword='魔搭')

-print(res)

-```

-

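### SenseVoiceSmall model

Takes speech of any length as input and outputs the corresponding text with punctuation and sentence breaks. Five languages are supported: Chinese, English, Japanese, Cantonese, and Korean. \[Character-level timestamps and speaker identity\] will be supported later.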

--

Gitblit v1.9.1